A package manager is a program that helps organize software libraries and tools into instances, namespaces, silos, or environments, making it easier to work with multiple libraries and versions.

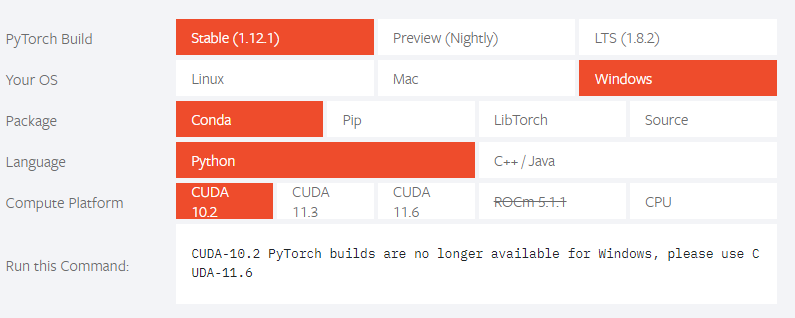

In machine learning, when a user works with multiple libraries and programs, keeping track of which versions play nicely together is a must. Python has a few dozen versions available; PyTorch has “Stable 1.3 and Nightly”; TensorFlow has a dozen 1.x versions plus 2.0. Organizing libraries into silos is best done with a Python package manager. Several are available to programmers, and choosing one over another is largely a matter of taste.

Yufeng Guo, a Google Developer Advocate, has presented a high-level overview of working with Python packages. Here is a summary of his talking points:

- Pip: Python’s built-in package manager, which has been available for some time. It can install programs like TensorFlow, NumPy, Pandas, Jupyter, and many more, along with their dependencies.

- “There are two packages for managing Pip environments.”

- Anaconda: a Python distribution that works well for data science projects. It “brings together many of the tools in data science and machine learning in just one install” and can be used to create separate environments for different projects. Its package manager is called Conda.

- Virtualenv: “a package that allows the creation of named virtual environments where Pip packages can be stored in an isolated environment”

- Pyenv: a tool that sits on top of Virtualenv and Anaconda. It can be used to manage both of them as well as different versions of Python.
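The idea of an isolated environment can be sketched with Python's standard-library `venv` module, a built-in counterpart to Virtualenv (a swapped-in tool, not one named in the talk). This minimal sketch, assuming Python 3.3 or later, creates an environment in a temporary directory; the name `demo-env` is a hypothetical placeholder.

```python
import sys
import tempfile
import venv
from pathlib import Path

# Hypothetical location for the environment, inside a fresh temp directory.
base_dir = Path(tempfile.mkdtemp())
env_dir = base_dir / "demo-env"

# Create the isolated environment. with_pip=False skips bootstrapping pip,
# so the sketch runs quickly and needs no network access. Symlinking the
# interpreter mirrors the default behavior of `python -m venv` on POSIX.
venv.create(env_dir, with_pip=False, symlinks=(sys.platform != "win32"))

# Every venv records its settings in pyvenv.cfg at the environment root.
cfg_text = (env_dir / "pyvenv.cfg").read_text()

# The environment's interpreter lives in bin/ on POSIX and Scripts/ on Windows.
bin_dir = env_dir / ("Scripts" if sys.platform == "win32" else "bin")

print(f"Created environment at {env_dir}")
print(cfg_text)
```

Running `python -m venv demo-env` from a shell does the same thing; once the environment is activated, `pip install` places packages inside it rather than in the system-wide Python.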

Python Libraries

Many Python libraries are available as open-source projects. Some of the most popular are NumPy, SciPy, Pandas, and Matplotlib.

Below is an illustration of the PyTorch stack.

Below is a high-level summary of four popular libraries.

NumPy

NumPy is a standard in the scientific community. It contains a large set of mathematical functions and features, and it is fast. In one test, a blogger “initialized 14 arrays of the size 40000 X 40000, one million times,” and NumPy outperformed PyTorch, though not by much. NumPy is widely regarded as the ideal tool for working with multi-dimensional arrays and matrices (data arranged in rows and columns).
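As a small illustration of NumPy's multi-dimensional arrays, the sketch below builds a 2 × 3 matrix and applies vectorized operations to it. The values are arbitrary examples chosen so the results are easy to check by hand.

```python
import numpy as np

# A 2 x 3 matrix: 2 rows, 3 columns.
m = np.array([[1.0, 2.0, 3.0],
              [4.0, 5.0, 6.0]])

# Element-wise arithmetic runs in compiled code, with no Python loop.
scaled = m * 10

# Reductions along an axis: axis=0 collapses the rows (one value per column),
# axis=1 collapses the columns (one value per row).
col_sums = m.sum(axis=0)
row_means = m.mean(axis=1)

# Matrix product of m (2x3) with its transpose (3x2) gives a 2x2 result.
gram = m @ m.T

print(m.shape, scaled, col_sums, row_means, gram, sep="\n")
```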

SciPy

SciPy (Scientific Python) is a collection of Python libraries used for scientific computing and engineering. The SciPy ecosystem was designed to work with NumPy, Matplotlib, SymPy, Pandas, and IPython. One of the packages in the collection is the SciPy library, which comes with the following modules:

- Numerical integration

- Fourier transforms

- Signal processing and image processing

- ODE solvers

- Interpolation

- Optimization

- Linear algebra

- Statistics
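Three of the modules listed above (numerical integration, optimization, and linear algebra) can be sketched with `scipy.integrate.quad`, `scipy.optimize.minimize_scalar`, and `scipy.linalg.solve`. The functions and the small linear system are arbitrary examples chosen so the answers can be checked by hand.

```python
import numpy as np
from scipy import integrate, linalg, optimize

# Numerical integration: the integral of x**2 from 0 to 1 is exactly 1/3.
# quad returns the estimate and an estimate of its absolute error.
area, abs_err = integrate.quad(lambda x: x**2, 0.0, 1.0)

# Optimization: (x - 2)**2 + 1 has its minimum at x = 2.
res = optimize.minimize_scalar(lambda x: (x - 2.0) ** 2 + 1.0)

# Linear algebra: solve A @ x = b, i.e. 3x + y = 9 and x + 2y = 8.
A = np.array([[3.0, 1.0],
              [1.0, 2.0]])
b = np.array([9.0, 8.0])
solution = linalg.solve(A, b)

print(f"integral = {area:.6f} (error estimate {abs_err:.1e})")
print(f"minimum at x = {res.x:.6f}")
print(f"solution = {solution}")
```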

Pandas

Pandas is a Python library, built on top of NumPy, used for data analysis and data manipulation. Previously, we described how Pandas was used for data preparation. It can ingest raw data, clean it up (for example, by handling missing values), and then transform it. Here is a short list of Pandas features:

- Handles missing data

- Converts “ragged, differently-indexed data in other Python and NumPy structures” into DataFrame objects

- Supports slicing, subsetting, merging, joining, and fancy indexing

- Supports pivoting and reshaping datasets

- “Performs split-apply-combine operations on datasets”
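Several of the features above (handling missing data, merging, split-apply-combine, and pivoting) can be combined in one short sketch. The tables, column names, and values are hypothetical, made up for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical raw data with one missing value.
sales = pd.DataFrame({
    "store": ["A", "A", "B", "B"],
    "month": ["Jan", "Feb", "Jan", "Feb"],
    "units": [10, np.nan, 7, 5],
})
regions = pd.DataFrame({"store": ["A", "B"], "region": ["North", "South"]})

# Missing data: replace NaN with 0 (dropna() would discard the row instead).
sales["units"] = sales["units"].fillna(0)

# Merging/joining: attach each store's region using the shared "store" column.
merged = sales.merge(regions, on="store", how="left")

# Split-apply-combine: split by region, sum units in each group, combine.
units_by_region = merged.groupby("region")["units"].sum()

# Reshaping: pivot to one row per store and one column per month.
wide = merged.pivot(index="store", columns="month", values="units")

print(units_by_region)
print(wide)
```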

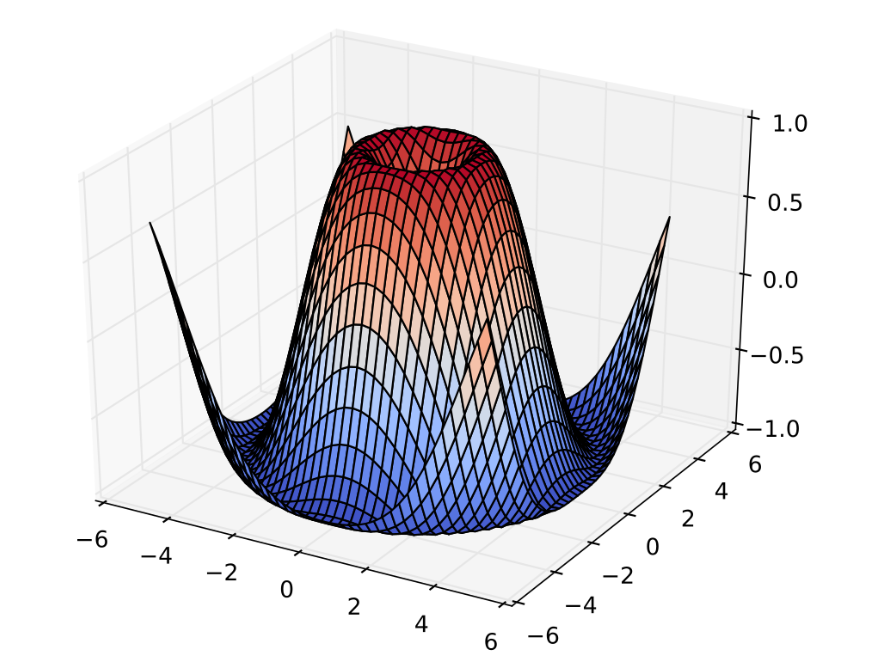

Matplotlib

Matplotlib is a Python library used for 2D plotting. It can be used to “generate plots, histograms, power spectra, bar charts, error charts, scatterplots,” and more. Third-party packages can extend its capabilities, and it comes with a large number of modules, including pyplot, which gives it an interface similar to MATLAB.
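A minimal pyplot sketch follows: it draws a line plot and a histogram side by side and saves them to a PNG file. The `Agg` backend (which renders to files without needing a display), the file name `matplotlib_demo.png`, and the randomly generated data are assumptions for illustration.

```python
from pathlib import Path

import matplotlib
matplotlib.use("Agg")  # render to files only; no display needed
import matplotlib.pyplot as plt
import numpy as np

x = np.linspace(0, 2 * np.pi, 200)
rng = np.random.default_rng(seed=0)
samples = rng.normal(loc=0.0, scale=1.0, size=1_000)

# Two side-by-side axes created through the MATLAB-like pyplot interface.
fig, (ax_line, ax_hist) = plt.subplots(1, 2, figsize=(8, 3))

# Left panel: a labeled line plot.
ax_line.plot(x, np.sin(x), label="sin(x)")
ax_line.set_xlabel("x")
ax_line.set_ylabel("sin(x)")
ax_line.legend()

# Right panel: a histogram of the random samples.
ax_hist.hist(samples, bins=30)
ax_hist.set_title("Histogram of normal samples")

fig.tight_layout()
out_path = Path("matplotlib_demo.png")
fig.savefig(out_path)
plt.close(fig)  # free the figure's memory once it is saved
```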