File System Intro

Intro

A file system sits on top of a disk drive (SSD, SAS, or SATA) and divides its storage space into equally sized blocks. An index records which blocks hold each file. Windows uses NTFS, while Linux supports several file systems.

- Windows 10: NTFS

- Linux: Ext4, NTFS, and FAT32, among others. Ext4 is the default on many distros and the most popular choice
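To make the idea of equally sized blocks concrete, here is a minimal Python sketch. It assumes a 4096-byte block size for the arithmetic (a common default, not a figure from this article), and the helper names `blocks_needed` and `slack_bytes` are made up for illustration. On Linux or macOS, `os.statvfs` reports the actual block size of a mounted file system.

```python
import os


def blocks_needed(file_size: int, block_size: int = 4096) -> int:
    """Return how many fixed-size blocks a file of file_size bytes occupies."""
    if file_size < 0 or block_size <= 0:
        raise ValueError("file_size must be >= 0 and block_size must be > 0")
    # Round up: a partially filled last block still takes a whole block.
    return -(-file_size // block_size)


def slack_bytes(file_size: int, block_size: int = 4096) -> int:
    """Return the unused bytes left over in the file's last block."""
    return blocks_needed(file_size, block_size) * block_size - file_size


if __name__ == "__main__":
    print(blocks_needed(10_000))  # 3 blocks of 4096 bytes
    print(slack_bytes(10_000))    # 2288 bytes unused in the last block
    if hasattr(os, "statvfs"):    # not available on Windows
        st = os.statvfs("/")
        print("block size:", st.f_frsize, "total blocks:", st.f_blocks)
```

The rounding-up step is the reason many small files use more disk space than the sum of their sizes suggests.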

NTFS

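- Supports volumes of up to 8 petabytes

- File permissions and encryption on individual files

- Compression of individual files

- Journaling file system

- Uses the journal to recover to a consistent state after a power failure

- Native to Windows; Linux can read and write it through drivers such as NTFS-3G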

Ext4

- Developed for Linux

- Max volume size is one exabyte

- Max file size is 16 TB (with 4 KB blocks)

- Journaling file system

- No native Windows or macOS support

FAT32

- Supports drives of up to 2 TB

- Max file size is 4 GB

- Compatible with different operating systems

- Used on USB flash drives, memory cards, digital cameras, etc.

- No built-in compression or encryption
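The file size limits listed above are the ones people hit most often in practice, for example when copying a large video onto a FAT32 USB drive. The sketch below encodes the two limits from the lists as a lookup table. It assumes the "GB" and "TB" figures are binary units (GiB and TiB), and the names `MAX_FILE_SIZE` and `fits` are hypothetical helpers, not part of any library.

```python
GIB = 1024 ** 3
TIB = 1024 ** 4

# Maximum single-file sizes in bytes, taken from the lists above.
# Assumption: the article's GB/TB figures are read as GiB/TiB.
MAX_FILE_SIZE = {
    "FAT32": 4 * GIB - 1,  # size is stored in a 32-bit field: 2**32 - 1 bytes
    "Ext4": 16 * TIB,      # with 4 KiB blocks
}


def fits(file_size: int, fs_name: str) -> bool:
    """Return True if a file of file_size bytes fits under the file system's limit."""
    return file_size <= MAX_FILE_SIZE[fs_name]


if __name__ == "__main__":
    movie = 5 * GIB
    for name in MAX_FILE_SIZE:
        print(name, "ok" if fits(movie, name) else "file too large")
```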

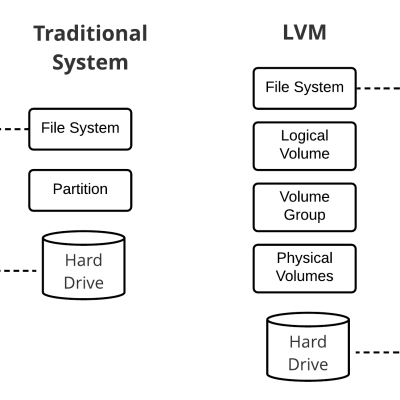

Logical Volume Manager

LVM (Logical Volume Manager) is an abstraction layer on Linux systems that sits between the disk drives and the file system. It lets users create volumes that span multiple disks and supports several RAID levels. Volume managers have been around for more than a decade. Popular earlier ones include the Veritas Volume Manager, the HP-UX Logical Volume Manager, and Sun's Solaris Volume Manager. LVM itself is free and ships with most Linux distros. Its features include the following:

- One volume group can run on multiple drives

- Resize, create, and delete volumes on the fly

- Add disk drives and expand volumes dynamically

- Supports copy-on-write snapshots, including read/write snapshots

- Supports software RAID 0, 1, 5, and 6
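The features above map onto a handful of LVM command-line tools. The following Python sketch only builds the command lines and prints them; it does not run anything, since the real commands need root and will overwrite data on the named disks. The device paths, the volume group name `vg_data`, the volume name `lv_data`, and the sizes are all placeholder assumptions, and `lvm_plan` is a made-up helper.

```python
from typing import List


def lvm_plan(disks: List[str], extra_disk: str = "/dev/sdd",
             vg: str = "vg_data", lv: str = "lv_data") -> List[List[str]]:
    """Build (but do not run) LVM commands for a typical volume lifecycle."""
    lv_path = f"/dev/{vg}/{lv}"
    return [
        ["pvcreate", *disks],                       # mark disks as physical volumes
        ["vgcreate", vg, *disks],                   # one volume group across all disks
        ["lvcreate", "-L", "10G", "-n", lv, vg],    # create a 10 GiB logical volume
        ["pvcreate", extra_disk],                   # prepare an additional disk
        ["vgextend", vg, extra_disk],               # add it to the volume group
        ["lvextend", "-L", "+5G", lv_path],         # grow the logical volume by 5 GiB
        ["lvcreate", "-s", "-L", "1G", "-n", f"{lv}_snap", lv_path],  # snapshot
    ]


if __name__ == "__main__":
    for cmd in lvm_plan(["/dev/sdb", "/dev/sdc"]):
        print(" ".join(cmd))
```

Growing the logical volume does not grow the file system on it; that is a separate step that depends on the file system in use.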

Advanced File Systems

Advanced file systems blur the line between basic file systems and distributed storage software, and some overlap in functionality, OpenZFS and Ceph for example. XFS and Lustre have been used in high-performance computing for more than a decade, in both scientific research and supercomputing.

XFS File System

XFS is supported by several Linux distros and is the default file system for Red Hat Enterprise Linux 7. It is a 64-bit journaling file system that divides the disk into allocation groups, large contiguous regions that play the role block groups play in Ext4. Each allocation group manages its own inodes (index nodes) and free space, acting almost as if it were its own file system. Because the groups are independent, I/O operations on different groups can proceed in parallel.

- Created by Silicon Graphics in 1993

- High-performance 64-bit journaling file system

- Supported by most Linux distros

- Used in large enterprises

- Default file system for Red Hat Enterprise Linux 7

- Designed for high I/O and supports guaranteed I/O

- Direct I/O can bypass the kernel file cache, going from user buffer directly to hardware

- Reduces CPU load

- Theoretical max file system size of 8 exabytes (vendor-supported limits are lower)

- Ideal for very large file system implementations

- Used in scientific research projects like CERN and Fermilab with petabytes of storage

- Disk layout is partitioned into allocation groups, which are larger than block groups and can be up to 1 TB each
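As a rough illustration of how allocation groups carve up a disk, the sketch below treats the file system as a flat range of bytes split into equal groups. This is a simplified model, not the on-disk XFS format: the real group count and size are chosen when the file system is created, and the function names here are invented for the example. The 1 TB cap from the list above is read as 1 TiB.

```python
from typing import Tuple

TIB = 1024 ** 4
MAX_AG_SIZE = 1 * TIB  # upper bound on allocation group size, from the list above


def ag_count(fs_size: int, ag_size: int = MAX_AG_SIZE) -> int:
    """Return how many allocation groups cover a file system of fs_size bytes."""
    if not 0 < ag_size <= MAX_AG_SIZE:
        raise ValueError("ag_size must be between 1 byte and 1 TiB")
    return -(-fs_size // ag_size)  # round up so the tail is covered


def allocation_group_for(offset: int, ag_size: int = MAX_AG_SIZE) -> Tuple[int, int]:
    """Map a byte offset to (group index, offset inside that group)."""
    return offset // ag_size, offset % ag_size


if __name__ == "__main__":
    print(ag_count(10 * TIB))                 # groups in a 10 TiB file system
    print(allocation_group_for(3 * TIB + 5))  # which group holds this byte
```

Two writes that land in different groups touch separate inode and free-space structures, which is what lets them proceed without contending with each other.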
